Shifting the Boundaries of the Human Knowledge with AI: Director's Cut (Extras)

Language, Data, LLMs, GenAI, the Endless Loop of Knowledge and a Few Future Predictions

This article is an extension of the original lecture.

Language, Our Oldest Technology and Possibly Our Biggest Bottleneck

Human language is an incredible invention. A compressive, lossy codec for the mind.

It evolved for survival, storytelling, gossip, and cooperation — not for describing quantum fields, multi-dimensional manifolds, or embedding spaces. Yet here we are, trying to use 50,000 years of biological slang to communicate with systems that never lived in a savanna, never feared a tiger, and never needed to impress a tribe around a fire.

This alone creates tension.

Language is:

Ambiguous — almost every word has multiple meanings.

Biocentric — optimized for human perception.

Slow — you can think faster than you can speak.

Shaped by culture — and culture is chaotic.

Compression-heavy — entire models are reduced to single words (“gravity”, “life”).

Constantly mutating — Gen Z invents new words faster than models can retrain.

The problem is not that language is “bad.” The problem is that we mistake it for reality.

And Now We Give This Noisy Communication Protocol to Machines!

This is where the trouble begins.

LLMs inherit all the structural constraints of human language — because that’s their training substrate. You get all the ambiguity, all the imprecision, all the hidden assumptions baked right into the embeddings.

When you talk to a model, you don’t give it “the problem.” You give it a sentence about the problem, in a lossy format, constrained by centuries of linguistic drift.

This is why prompting is hard. This is why we need entire fields like “prompt engineering.” We’re performing surgery with crayons.

Despite all its flaws, language enables alignment between humans and machines.

It is our Rosetta Stone: a shared symbolic space.

Language lets us:

Compress complex models

Transfer abstract concepts

Generate structured hypotheses

Build shared mental models

Map human goals into machine action

The paradox is beautiful: Language is the weakest link — and yet our only link.

Is Our Language Suitable for a Higher Level of World Comprehension?

If humans and AIs ever build a deeper connection, it may require a new kind of language: one that is less ambiguous, more structural, more mathematical. Even our mathematics may prove too human, too simple, too dumb. But until then, we must master the language we have.

Because if we cannot precisely articulate the problem, we should not expect the machine to deliver a precise solution.

Data, LLMs, GenAI, and the Endless Loop of Knowledge

Large language models take chaotic data and build a structured manifold: a smooth, continuous space of meaning.

They do not “understand.” But they encode meaning in ways that are:

high-dimensional

analogical

relational

compositional

emergent

LLMs build a topology of knowledge that is arguably more coherent than human language itself. This is why they appear intelligent. They are performing geometric reasoning over meaning.
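To make "geometric reasoning over meaning" a little more tangible, here is a minimal sketch using hand-made vectors. Everything in it is an assumption for illustration: the five words, the three dimensions, and the numbers are invented, and the `analogy` helper is hypothetical. Real models learn far larger spaces from data, and their axes carry no neat labels.

```python
import math

# Hypothetical 3-dimensional "embeddings". The axes loosely stand for
# (royalty, maleness, femaleness). These numbers are invented for the example;
# real embeddings are learned from data and are not hand-labeled.
EMBEDDINGS = {
    "king":  (0.9, 0.8, 0.1),
    "queen": (0.9, 0.1, 0.8),
    "man":   (0.1, 0.9, 0.1),
    "woman": (0.1, 0.1, 0.9),
    "apple": (0.0, 0.2, 0.2),
}


def cosine(a, b):
    """Cosine similarity: how closely two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)


def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = tuple(
        x - y + z
        for x, y, z in zip(EMBEDDINGS[a], EMBEDDINGS[b], EMBEDDINGS[c])
    )
    candidates = (word for word in EMBEDDINGS if word not in (a, b, c))
    return max(candidates, key=lambda word: cosine(target, EMBEDDINGS[word]))


if __name__ == "__main__":
    # "king" minus "man" plus "woman" lands nearest to "queen" in this toy space.
    print(analogy("king", "man", "woman"))
```

In this toy space a relation between words becomes a direction, and an analogy becomes vector arithmetic. That is the sense of "geometric" used here, not a claim about how any particular model works internally.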

But the tradeoff is severe:

They inherit every weakness of the data.

They inherit every weakness of the language.

They hallucinate where the data is thin.

They produce statistical echoes of our own linguistic biases.

LLMs are powerful — but they are also mirrors.

GenAI is the Surface Layer We Interact With

It’s the part that produces text, code, images, and ideas. What fascinates me here is that GenAI doesn’t just retrieve patterns. It synthesizes, blends, transforms, and abstracts. Sometimes, it even proposes structures we would not have invented ourselves.

Generative models are compressors and decompressors of possible worlds within the boundaries of language.

This is where the golden quadrant becomes real: GenAI allows us to explore the space of plausible models that humans cannot compute manually.

We now have a four-step loop: Data → Model → Generation → New Data.

This is a brand-new epistemic engine for generating knowledge, as I showed at the beginning.

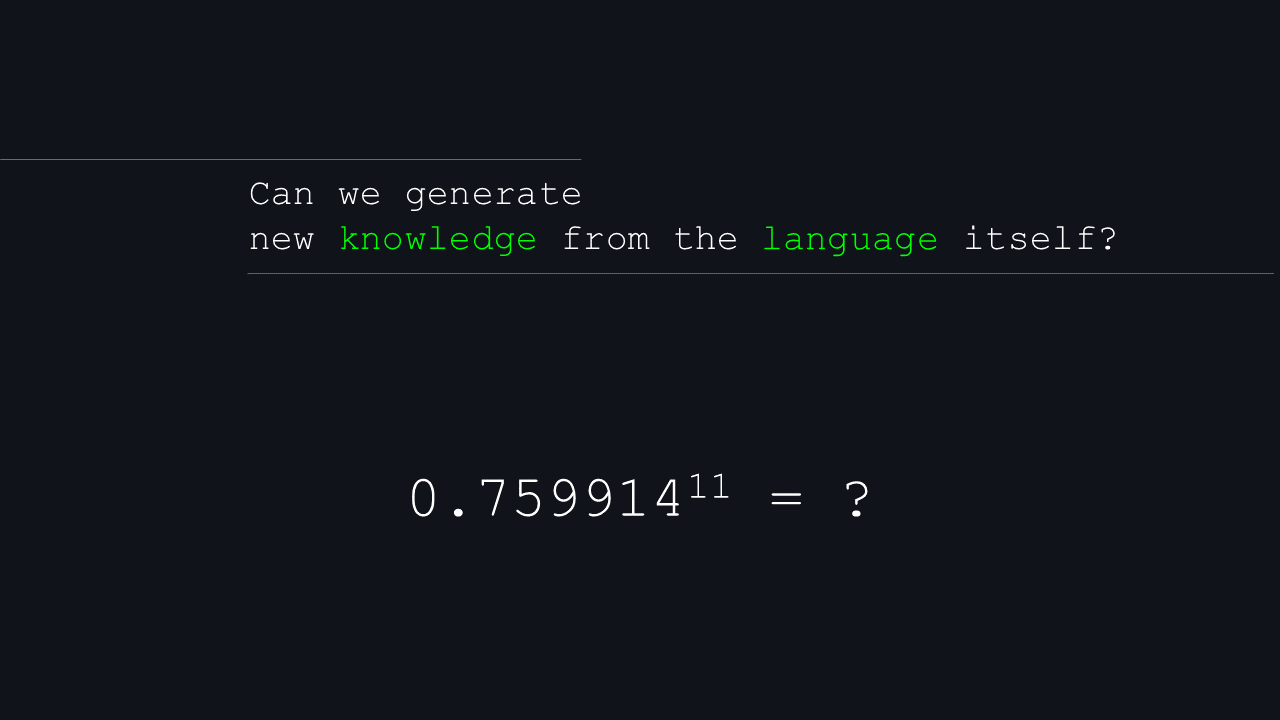
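The four-step loop can be sketched in a few lines. This is a toy under loud assumptions: the "model" is a bigram table rather than a neural network, the two seed sentences are invented, and the function names (`train`, `generate`, `run_loop`, `vocabulary`) are hypothetical. It only shows the shape of the loop, not how real systems are trained.

```python
import random
from collections import defaultdict


def train(corpus):
    """Data -> Model: record which word follows which (a bigram table)."""
    model = defaultdict(list)
    for sentence in corpus:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            model[current].append(following)
    return model


def generate(model, start, rng, max_words=8):
    """Model -> Generation: walk the bigram table from a start word."""
    words = [start]
    # Stop at the length limit or when the last word has no known successor.
    while len(words) < max_words and model.get(words[-1]):
        words.append(rng.choice(model[words[-1]]))
    return " ".join(words)


def vocabulary(corpus):
    """All distinct words that appear anywhere in the corpus."""
    return {word for sentence in corpus for word in sentence.split()}


def run_loop(corpus, rounds=3, seed=0):
    """Run Data -> Model -> Generation -> New Data for a few rounds."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    corpus = list(corpus)      # copy, so the caller's list is untouched
    for _ in range(rounds):
        model = train(corpus)
        start = rng.choice(sorted(model))
        corpus.append(generate(model, start, rng))  # Generation -> New Data
    return corpus


if __name__ == "__main__":
    seed_corpus = ["language is a lossy codec", "a model is a mirror of language"]
    grown = run_loop(seed_corpus, rounds=5)
    print(len(grown), vocabulary(grown) == vocabulary(seed_corpus))
```

In this toy, each round adds a sentence that may never have been written before, yet the vocabulary stays exactly the same: the loop recombines what the data already contains.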

Can This Loop Produce Something Fundamentally New?

The optimistic narrative you often hear goes something like: “Since LLMs recombine language in new ways, they can discover new truths hidden in text.”

But let’s dissect this carefully.

Language is not just a medium but a latent structure of the world.

Over centuries, humans have encoded:

patterns

analogies

causal assumptions

heuristics

models

folk theories

scientific abstractions

Language is a compressed archive of human cognition.

When a model explores this space, it can:

Reveal latent patterns

Suggest missing connections

Propose hypotheses hidden in the structure

Surface correlations we never articulated

Generalize beyond any individual text source

In this sense, yes — the geometry of language can hide new knowledge.

And models can discover it.

But only under one condition: the knowledge must be implicit in the corpus. LLMs cannot transcend what humanity has already baked into the linguistic manifold. They share some of our blind spots.

The moment we try to go beyond implicit human knowledge, we hit a wall.

We have no corpus written by physics itself.

No corpus written by evolution.

No corpus written by spacetime.

No corpus written by the underlying machinery of reality.

Until we build foundation models trained on non-linguistic data — raw sensory streams, real physical interactions, symbolic scientific models — language will remain a ceiling.

LLMs can rearrange our thoughts. They cannot generate thoughts the universe never encoded.

Not yet.

So if an LLM has never encountered a question together with its answer, and cannot call a tool such as a calculator, it is unlikely to determine the answer reliably.

It also won’t make you a coffee.

Not yet :)
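The calculator point can be illustrated with a deliberately crude caricature. The assumptions are heavy: the "model" here is only a lookup table of question and answer pairs it has seen (`SEEN_ANSWERS`, invented for this example), and `answer` and `calculator` are hypothetical helpers. Real LLMs generalize far beyond lookup, so read this as a sketch of the argument, not of any actual system.

```python
import ast
import operator

# Hypothetical "memory": question/answer pairs already present in the corpus.
SEEN_ANSWERS = {"2 + 2": "4"}

# The only operations this toy calculator tool supports.
OPERATORS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression):
    """Evaluate +, -, *, / over plain numbers by walking the parsed syntax tree."""

    def evaluate(node):
        is_number = isinstance(node, ast.Constant) and isinstance(
            node.value, (int, float)
        )
        if is_number and not isinstance(node.value, bool):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPERATORS:
            left, right = evaluate(node.left), evaluate(node.right)
            return OPERATORS[type(node.op)](left, right)
        raise ValueError("unsupported expression")

    return evaluate(ast.parse(expression, mode="eval").body)


def answer(question, tools_enabled):
    """Answer from memory if possible, else try the tool, else give up (None)."""
    if question in SEEN_ANSWERS:
        return SEEN_ANSWERS[question]
    if tools_enabled:
        try:
            return str(calculator(question))
        except (ValueError, SyntaxError, ZeroDivisionError):
            pass  # the tool cannot handle this request
    return None


if __name__ == "__main__":
    print(answer("2 + 2", tools_enabled=False))        # seen before
    print(answer("1234 * 5678", tools_enabled=False))  # unseen, no tool
    print(answer("1234 * 5678", tools_enabled=True))   # unseen, tool available
    print(answer("make coffee", tools_enabled=True))   # no tool for that
```

The design choice worth noticing is the order of fallbacks: memory first, tool second, and an honest `None` when neither applies.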

Models may help generate novel hypotheses, but not new truth. The moment a model outputs something surprising, we must still:

Validate it

Embed it into a scientific model

Test it

Use it to make predictions

See if reality agrees

Language alone is insufficient for scientific discovery. But it is an excellent engine for hypothesis generation.

That alone moves the boundaries of human knowledge.

This is why foundation models are heading beyond language, toward models that integrate:

spatial data

physical simulation

biological signals

multimodal sensory streams

mathematical structures

symbolic scientific rules

Only then will AI be able to generate knowledge that does not simply remix human thought. Until then, we live inside the linguistic bubble our species built.

Near-Future Predictions

Predicting the future is traditionally a dangerous sport — but also an irresistible one.

So let me sketch a few trajectories that seem almost inevitable given the current data, trends, and incentives. These aren’t prophecies. Think of them as models: imperfect, but useful.

Data Privacy Becomes Fiction

Privacy stops being about “what you shared” and becomes about “what can be deduced about you.” With enough data points — many of which you never knew you emitted — models will reconstruct your preferences, intentions, habits, and vulnerabilities with unsettling precision.

Privacy becomes less of a right and more of an illusion we nostalgically describe to younger generations.

Democratization of Hacking

Hacking used to require expertise. Now, it requires instructions in natural language. Agentic models can already chain actions, write malware, and adapt to defenses.

When such tools become cheap and ubiquitous, the attack surface grows; defense becomes a continuous battle of AI versus AI.

A Flood of Deepfakes

Deepfakes are no longer a novelty — they’re becoming background noise.

Soon, the question won’t be “Is this video real?” but “Does it matter whether it’s real?”

We’ll operate in a hybrid epistemic environment where synthetic and authentic realities blend. Institutions, media, governments, and individuals will struggle to maintain trust.

Reality becomes probabilistic, just like truth in machine learning.

Advanced Phishing and Social Engineering

Phishing evolves into personalized, AI-generated psychological operations. Models will craft near-perfect imitations of voices, faces, and writing styles, tailored to each individual’s psyche. Your future inbox may consist mostly of messages written precisely for you, by systems designed to exploit every cognitive bias known to psychology.

Trust itself becomes a vulnerability.

Job Displacement and Skill Atrophy

AI won’t eliminate all jobs, but it will eat the middle: tasks requiring moderate expertise, moderate creativity, moderate responsibility. The low end gets automated; the high end scales with AI assistance. Meanwhile, humans risk losing skills we once considered foundational: writing, reasoning, even the ability to sit with an open problem without asking an LLM for immediate closure.

We may become cognitively dependent, outsourcing more than we realize.

Cheap Dopamine and Infinite Content

AI becomes the cheapest source of dopamine humanity has ever built: hyper-personalized entertainment, companions, narratives, worlds, and attention loops.

We already have infinite content. Soon we’ll have infinite content made specifically for each person, optimized for maximal retention.

The line between “personalized experience” and “addiction-by-design” thins rapidly.

The Path Toward AGI

We will see:

Autogenous AI: systems capable of improving themselves in constrained domains

Recursive optimization loops

Foundation models trained on multimodal sensory reality, not just language or pictures

I don’t know if this leads to AGI, but I do know that the pace of capability growth is accelerating. And with scale, architecture, and multimodality converging, the magnitude of potential knowledge approaches something that feels uncomfortably close to infinity from our human vantage point — the singularity.

If intelligence keeps scaling and models begin optimizing themselves, the idea of a singularity becomes less science fiction and more a matter of when, not if. Not as an explosion of consciousness — but as a tipping point where:

human pace

biological limits

linguistic constraints

and cognitive bandwidth

simply fail to keep up.

Whether this future is utopian or catastrophic depends less on the models and more on our ability to control the change and adapt.

Thanks for reading!